Deterministic vs. Predictive AI: Why the Best CX Systems Use Both

Most discussions about AI in customer service skip a step. They jump straight to capabilities and outcomes, emphasizing things like automation rates, deflection metrics, cost per contact, and so on. But they miss a more foundational question: what kind of AI is doing the work?

That question matters more than most people realize. Deterministic AI for customer service operates very differently from predictive AI. If you don’t understand the AI underlying your automation, you won’t know what the system can guarantee, what it can only estimate, and where it will pose risks under pressure.

What is Deterministic AI?

Deterministic AI follows explicit, pre-defined logic. Given the same input, it produces the same output. Every time. No variance, no interpretation, no surprises.

In practice, this looks like rule-based systems, decision trees, and workflow automation. A customer submits a refund request for an order under $50 with a valid reason code? The system issues the refund. A billing inquiry arrives after hours? It routes to the overnight queue. The logic is written in advance, and the system executes it.
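The two rules above can be written down almost verbatim. This is a minimal sketch, not a reference implementation: the reason codes, business hours, queue names, and function names are assumptions made up for illustration, and only the $50 threshold comes from the example.

```python
from dataclasses import dataclass
from datetime import time

# Hypothetical policy values; real ones come from your own policies.
REFUND_LIMIT = 50.00
VALID_REASON_CODES = {"DAMAGED", "WRONG_ITEM", "NOT_AS_DESCRIBED"}
BUSINESS_OPEN, BUSINESS_CLOSE = time(9, 0), time(17, 0)


@dataclass(frozen=True)
class RefundRequest:
    order_total: float
    reason_code: str


def decide_refund(request: RefundRequest) -> str:
    """Same request in, same decision out."""
    if request.order_total < REFUND_LIMIT and request.reason_code in VALID_REASON_CODES:
        return "issue_refund"
    return "route_to_agent"


def route_billing_inquiry(received_at: time) -> str:
    """Inquiries outside business hours go to the overnight queue."""
    if BUSINESS_OPEN <= received_at < BUSINESS_CLOSE:
        return "billing_queue"
    return "overnight_queue"
```

Nothing here is inferred or estimated. A $50.00 order falls outside the "under $50" rule every single time, which is exactly the point.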

Where deterministic logic excels:

- Compliance-sensitive processes where specific actions must happen in a specific order.

- Policy enforcement that cannot vary based on conversational context.

- Calculations (pricing, refund amounts, etc.) that must remain consistent.

- Escalation triggers that need to fire reliably.

- Audit trails, where every step in a workflow must be logged and explainable.

Where deterministic logic falls short:

- It can’t handle what it wasn’t programmed for, and novel situations expose the gaps immediately.

- Building and maintaining rule sets at scale is time-intensive and brittle, and CX debt compounds every time the business changes a policy or adds a channel.

- It has no ability to interpret nuance, sentiment, or intent.

- Customer conversations are inherently variable, and rigid scripts create friction.

Determinism is the AI equivalent of a legal contract. The terms are explicit. The execution is predictable. And when something goes wrong, you can trace exactly why.

What is Predictive AI?

Predictive AI — including the large language models that power modern AI agents — works differently. Rather than following a fixed rulebook, it learns statistical patterns from large amounts of data and generates responses based on probability.

This is what gives AI agents their conversational fluency. They can interpret ambiguous phrasing, detect emotional tone, handle topics they haven't been explicitly trained on, and adapt their response based on context.
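To make "generates responses based on probability" concrete, here is a toy sketch. It is not how a language model works internally: the candidate replies and their probabilities are invented, and weighted random sampling stands in for the model.

```python
import random

# Toy stand-in for a model's output: made-up probabilities over candidate replies.
CANDIDATE_REPLIES = {
    "I can help you start a return.": 0.6,
    "Let me check your order status.": 0.3,
    "Could you tell me more about the issue?": 0.1,
}


def sample_reply(rng: random.Random) -> str:
    """Pick one reply at random, weighted by its probability."""
    replies = list(CANDIDATE_REPLIES)
    weights = list(CANDIDATE_REPLIES.values())
    return rng.choices(replies, weights=weights, k=1)[0]


def most_likely_reply() -> str:
    """Always pick the highest-probability reply (no sampling)."""
    return max(CANDIDATE_REPLIES, key=CANDIDATE_REPLIES.get)


rng = random.Random()
print(sample_reply(rng))    # may differ from run to run
print(most_likely_reply())  # the same on every run
```

The sampled reply can change between runs even though the input never does. That variability is the source of both the conversational flexibility and the inconsistency discussed below.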

Where predictive AI excels:

- Understanding natural language across channels, topics, and tones.

- Handling the long tail of contact types that no rule set could anticipate.

- Generating contextually appropriate, personalized responses at scale.

- Detecting intent and sentiment signals to inform routing or escalation decisions, enabling proactive customer service.

Where predictive AI falls short:

- It can be flat-out wrong, often with high confidence, which makes errors harder to detect and remedy.

- Outputs are non-deterministic: the same input can produce different results.

- “Black box” behavior makes it difficult to audit or explain specific decisions.

- Without guardrails, it can improvise in ways that create liability or erode trust.

Predictive AI fulfills the promises we always hear about AI: that it can act autonomously and truly replicate human behavior. But with autonomy comes the risk of inconsistency, which can be a major problem in customer-facing interactions.

The Problem with Over-Reliance on One Type of AI Over Another

Here's where most AI-for-CX implementations go wrong.

Organizations that deploy pure predictive AI get conversational capability without control. The system sounds good until it improvises a policy exception, gives inconsistent answers to the same question, or handles a compliance-sensitive situation without the required disclosures. Confidence without certainty is a liability in customer-facing contexts.

Organizations that rely exclusively on deterministic logic get control without capability. Rule-based workflows handle the cases they were designed for and fail everyone else. The maintenance burden compounds as the business evolves. Customers who don't fit the decision tree get a bad experience.

This is the false choice that has defined the first wave of AI in customer service: either a rigid automation tool or an autonomous agent that can’t be reined in. Either control or intelligence.

Neither option reflects how customer service actually works.

Why Hybrid Reasoning is the Mature Approach for CX

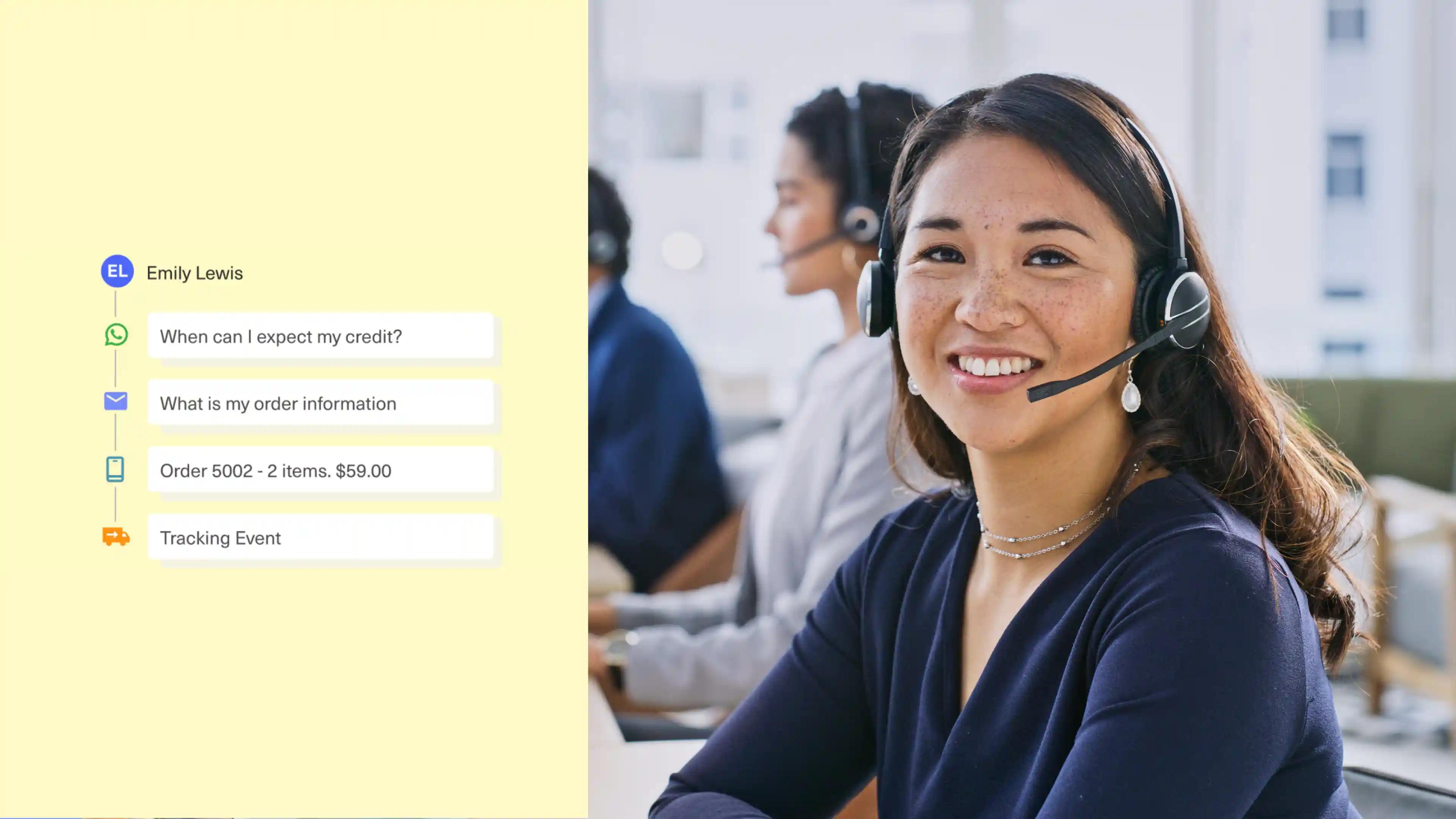

Customer service operations don't divide neatly into "things that need AI judgment" and "things that need fixed rules." They exist on a continuum, and the same conversation often requires both.

Here’s an example of what that might look like:

A customer initiating a return might start with a natural-language explanation of why the product didn't work for them. This is where predictive AI excels: interpreting the intent and acknowledging the frustration.

But the refund eligibility check needs to run against the actual return policy. Deterministic logic kicks in: no exceptions without manager approval.

The confirmation message should feel personal and conversational, again relying on predictive AI to generate an appropriate response based on the customer context it has at its disposal.

And the refund action itself must fire exactly as specified in the fulfillment workflow, again relying on deterministic logic.

These aren't competing approaches. They're complementary layers in the same system.
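The four steps of that return can be sketched as one function that alternates between the two layers. Everything below is hypothetical: the 30-day window, the field names, and especially the two "predictive" functions, which are simple placeholders (a keyword match and a template) standing in for real model calls.

```python
from dataclasses import dataclass

RETURN_WINDOW_DAYS = 30  # hypothetical policy value


@dataclass
class Order:
    order_id: str
    days_since_delivery: int
    amount: float


def interpret_intent(message: str) -> str:
    """Predictive step. Placeholder: a keyword match stands in for a model call."""
    text = message.lower()
    return "return_request" if "return" in text or "refund" in text else "other"


def is_eligible(order: Order) -> bool:
    """Deterministic step: the policy check makes no exceptions."""
    return order.days_since_delivery <= RETURN_WINDOW_DAYS


def draft_confirmation(order: Order) -> str:
    """Predictive step. Placeholder: a template stands in for generated text."""
    return f"Sorry it didn't work out. Your refund of ${order.amount:.2f} is on its way."


def issue_refund(order: Order) -> dict:
    """Deterministic step: always refunds the exact order amount."""
    return {"action": "refund", "order_id": order.order_id, "amount": order.amount}


def handle_return(message: str, order: Order) -> dict:
    if interpret_intent(message) != "return_request":
        return {"status": "not_a_return"}
    if not is_eligible(order):
        return {"status": "needs_manager_approval"}
    return {
        "status": "refunded",
        "reply": draft_confirmation(order),
        "refund": issue_refund(order),
    }
```

Note that the generated reply never decides whether the refund happens. The eligibility check and the refund action sit outside the model's reach.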

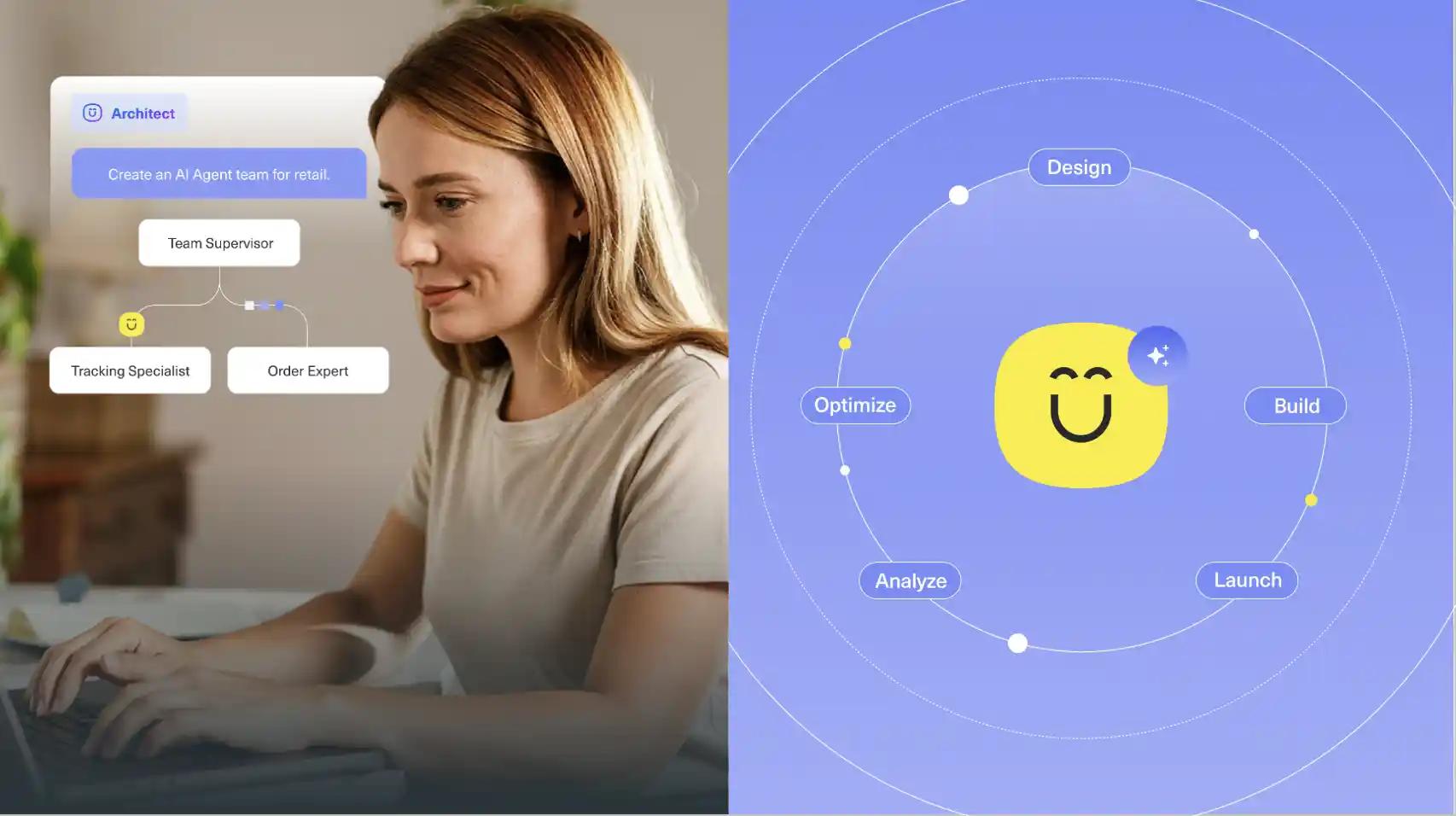

What an AI reasoning engine actually enables:

- Predictive AI handles the conversation: understanding context, interpreting language, generating responses.

- Deterministic logic enforces the non-negotiables: policy steps, compliance requirements, precise calculations.

- The system can navigate multi-step branching logic across an entire conversation, routing through conditions as they're met.

- Both layers are observable: you can see what the AI decided, why, and what deterministic logic ran next.

- Businesses control the flow, deciding which moments require AI judgment and which require guaranteed execution.

This architecture answers the question that pure AI platforms struggle to address: why should the AI be the only thing that gets to decide when an action fires? In a hybrid system, the business sets the terms. The AI operates within them.

How to Evaluate AI Systems Against This Framework

If you’re assessing AI for your CX operation, or auditing what you already have, here are the questions that matter.

1. Can the system guarantee specific outcomes?

There are moments in customer service where "probably right" isn't good enough (regulatory disclosures, refund approvals, SLA escalations).

If your AI platform can't give you a hard guarantee that a specific action will fire when a condition is met, regardless of what the AI infers from the conversation, that's a gap worth understanding. AI agents built for CX should be able to handle the full resolution sequence without improvising around your policies.
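One way to picture a hard guarantee is a deterministic check that runs before any AI suggestion is considered. A minimal sketch, assuming a made-up 24-hour SLA and hypothetical action names:

```python
SLA_HOURS = 24  # hypothetical SLA threshold


def next_action(hours_open: float, ai_suggestion: str) -> str:
    """The SLA trigger is checked first, so the AI suggestion cannot override it."""
    if hours_open >= SLA_HOURS:
        return "escalate_to_supervisor"
    return ai_suggestion
```

For example, `next_action(30, "send_followup")` returns `"escalate_to_supervisor"` no matter what the AI proposed, while `next_action(2, "send_followup")` lets the suggestion through.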

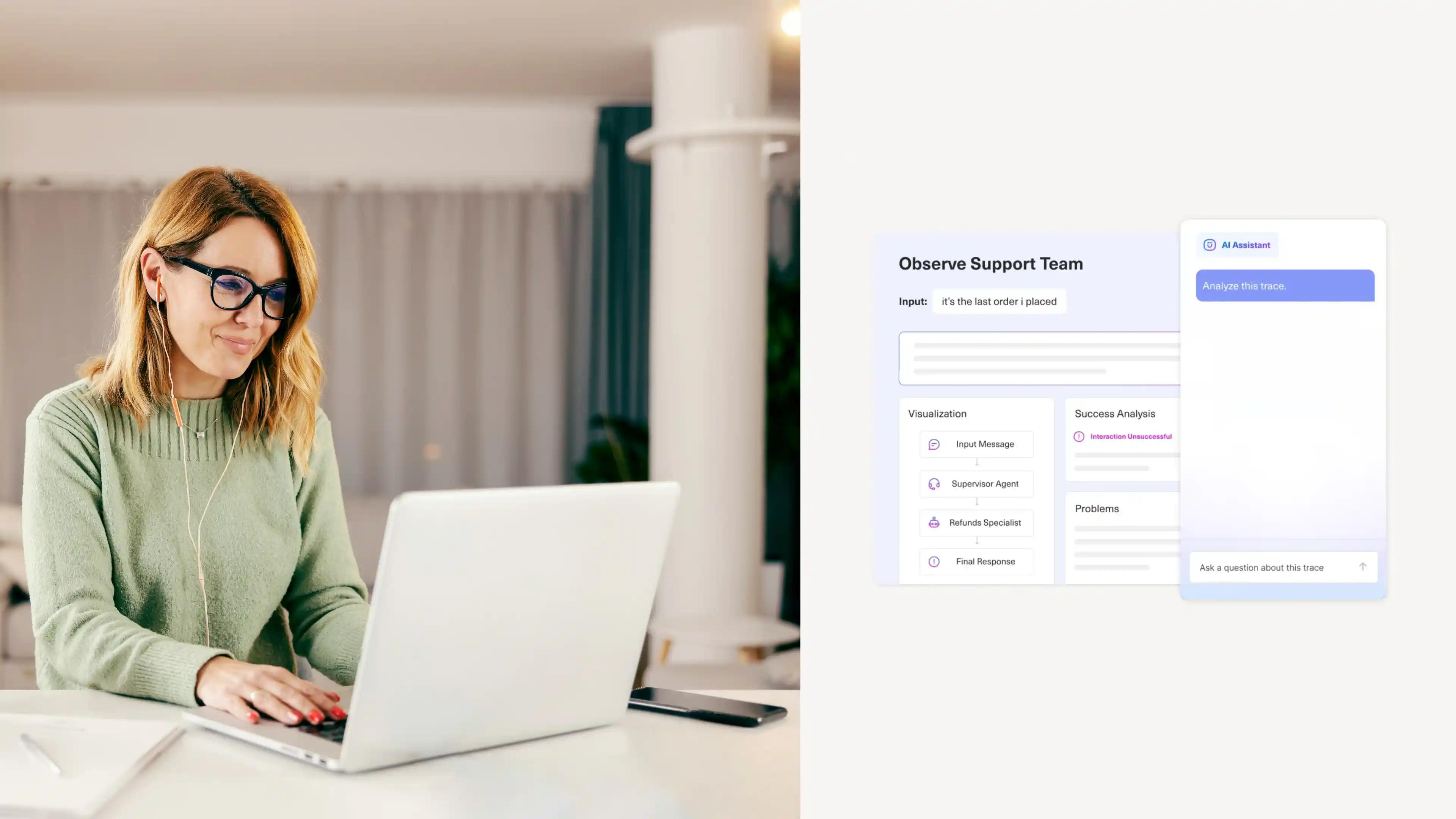

2. Is the reasoning observable?

After a conversation completes, can you see why the AI did what it did? Which steps ran, in what order, and why? Observability is a prerequisite for accountability, especially in regulated industries — and a system that can't explain itself is a system you can't confidently scale.

This is precisely why AI observability has become a first-order concern for CX teams moving from pilot to production. The same principle applies to your data: CX analytics should tell you why something happened and what to do next, not just log that it occurred.
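As a rough illustration of what "observable" could mean in practice, the sketch below records each step with the layer that produced it, the decision, and the reason. The class and field names are assumptions for illustration, not any platform's actual trace format.

```python
from dataclasses import dataclass, field


@dataclass
class TraceStep:
    layer: str     # "predictive" or "deterministic"
    decision: str
    reason: str


@dataclass
class ConversationTrace:
    steps: list[TraceStep] = field(default_factory=list)

    def record(self, layer: str, decision: str, reason: str) -> None:
        self.steps.append(TraceStep(layer, decision, reason))

    def explain(self) -> list[str]:
        """Return the steps in order, each with its layer and reason."""
        return [
            f"{i}. [{s.layer}] {s.decision}: {s.reason}"
            for i, s in enumerate(self.steps, start=1)
        ]


trace = ConversationTrace()
trace.record("predictive", "intent=return_request", "customer described a product that did not work")
trace.record("deterministic", "refund_approved", "order is inside the return window")
```

Calling `trace.explain()` after the conversation answers the three questions above: which steps ran, in what order, and why.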

3. Who controls the logic?

The most important question isn't what the AI can do; it's who decides the boundaries. Can your team define which paths are AI-driven and which are locked to deterministic execution? Or does the platform make that call?

CX organizations operating at scale need genuine governance over their automation: the ability to audit, adjust, and override, not just a dashboard showing what the AI did after the fact.
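Governance of this kind can be imagined as a mapping that the business owns and can change. The step names and the default below are hypothetical, chosen only to show the shape of the idea.

```python
from enum import Enum


class Mode(Enum):
    AI = "ai"
    DETERMINISTIC = "deterministic"


# Hypothetical step names; the business, not the platform, owns this mapping.
STEP_MODES = {
    "greet_customer": Mode.AI,
    "check_refund_eligibility": Mode.DETERMINISTIC,
    "issue_refund": Mode.DETERMINISTIC,
    "write_confirmation": Mode.AI,
}


def mode_for(step: str) -> Mode:
    """Unlisted steps default to deterministic handling, the more conservative choice."""
    return STEP_MODES.get(step, Mode.DETERMINISTIC)


def lock_step(step: str) -> None:
    """Override: pin a step to deterministic execution."""
    STEP_MODES[step] = Mode.DETERMINISTIC
```

Defaulting unknown steps to deterministic handling is a design choice in this sketch, not a rule. The point is that the decision is written down somewhere your team can audit and adjust.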

4. Does the architecture handle complexity or avoid it?

Real customer journeys aren't linear. They branch, escalate, loop back, and involve multiple systems. A platform designed around single-turn responses will paper over that complexity with workarounds. A platform designed for multi-step AI automation with conditional logic will handle it natively.
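A journey that branches, escalates, and loops back can be modeled as a small state machine. The states and events here are invented for a return scenario and are only meant to show what handling multi-step conditional logic natively might look like.

```python
# Hypothetical states and events for a return journey.
TRANSITIONS = {
    ("collect_details", "details_complete"): "check_eligibility",
    ("collect_details", "details_missing"): "collect_details",  # loop back
    ("check_eligibility", "eligible"): "issue_refund",
    ("check_eligibility", "ineligible"): "escalate_to_human",
    ("escalate_to_human", "approved"): "issue_refund",
    ("escalate_to_human", "denied"): "close",
    ("issue_refund", "done"): "close",
}


def advance(state: str, event: str) -> str:
    """Follow a defined transition; anything undefined escalates rather than guessing."""
    return TRANSITIONS.get((state, event), "escalate_to_human")


def run(events: list[str], state: str = "collect_details") -> list[str]:
    """Replay a sequence of events and return every state visited."""
    path = [state]
    for event in events:
        state = advance(state, event)
        path.append(state)
    return path
```

Feeding it `["details_missing", "details_complete", "ineligible", "approved", "done"]` walks through a loop, a branch, and an escalation before closing, all within one defined flow.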

5. What happens at the edges?

Every AI system has a competence boundary. The question is what happens when a conversation crosses it. Does the system escalate gracefully, with full context handed to a human agent? Or does it fail in ways that damage the customer relationship?

Human-in-the-loop design, where humans can step in, resolve the complex part, and hand back to AI to close, is what drives true consistency in experience. The quality of the edge is as important as the capability at the center.
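A graceful edge might look something like the sketch below: when confidence drops under a threshold, the system stops and hands a human everything it has. The 0.75 threshold and the fields on the handoff are assumptions for illustration; real systems will use their own signals.

```python
from dataclasses import dataclass
from typing import Optional

CONFIDENCE_FLOOR = 0.75  # hypothetical threshold; tune against real conversations


@dataclass
class Handoff:
    summary: str
    transcript: list[str]
    attempted_steps: list[str]


def route(confidence: float, transcript: list[str], attempted_steps: list[str]) -> Optional[Handoff]:
    """Return None to let the AI continue, or a Handoff carrying the full context."""
    if confidence >= CONFIDENCE_FLOOR:
        return None
    return Handoff(
        summary=f"AI confidence {confidence:.2f} below {CONFIDENCE_FLOOR:.2f}",
        transcript=list(transcript),
        attempted_steps=list(attempted_steps),
    )
```

The human agent receives the transcript and the steps already attempted, so the customer doesn't have to start over.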

A Modern AI Reasoning Engine Unifies Deterministic and Predictive Intelligence

Deterministic logic and predictive AI may be distinct concepts, but don’t think of them as mutually exclusive technologies. As AI continues to evolve, both in its power and in its breadth of use cases, it’s important to understand the fundamentals behind different types of AI and, more importantly, how they complement one another within the best solutions.

The organizations getting the most out of AI-powered CX aren't choosing between control and capability; they're building systems where deterministic and predictive intelligence coalesce into a seamless experience.

Want to learn more about Kustomer’s AI Reasoning Engine? Check out this post: Kustomer AI: A New Era of AI for Customer Experience.