Confident AI Agents, Start to Finish: Evaluations and Live Validation in Kustomer

Two new capabilities in Kustomer give your team full visibility into your AI agents, before they go live and during every conversation.

Deploying an AI agent is a leap of faith. You've configured it, written the procedures, mapped the flows. But the moment it goes live, a question lingers: is it actually doing what I think it's doing? And if something goes wrong, will I know, and will I know why?

For many CX teams, the answer to both is no. Testing before launch is manual and hard to do well. And once an agent is live, what's happening inside each conversation is largely invisible until after the conversation is closed. You see the outcome, not the reasoning. You find out something went wrong when a customer tells you.

We built two capabilities to change that. Together, they give your team confidence before your agent ever meets a customer, and live visibility into every conversation after it does.

Before You Go Live: Test Your Agent With Confidence

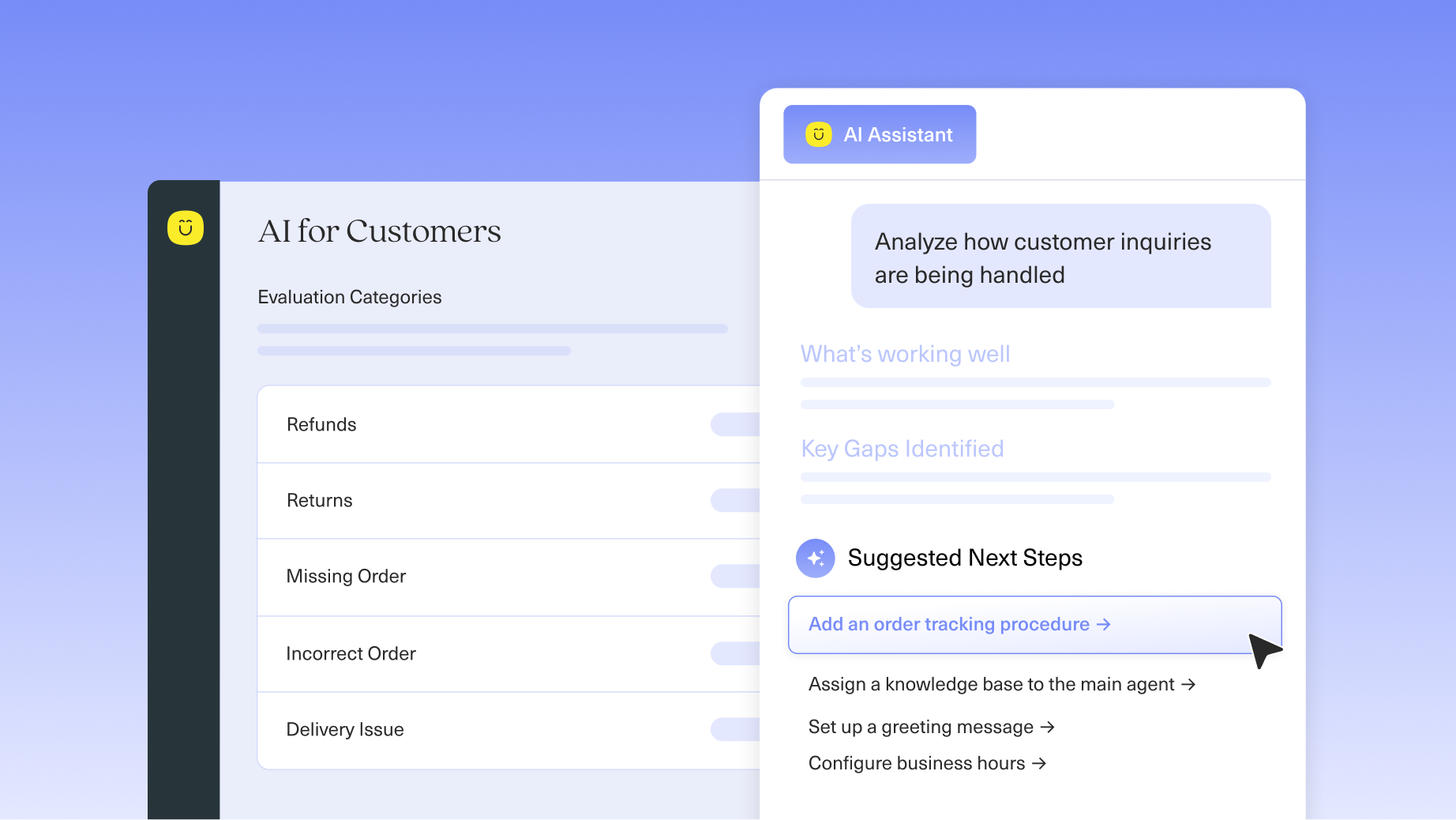

The AI Agent Assistant now includes evaluation capabilities, so the same assistant that helps you build and configure your agents can also help you test them. It analyzes your existing automations, understands the use cases they cover, and generates eval tests tailored to your specific setup. No templates to fill in. No technical expertise required.

You can also bring traces from past conversations where things didn't go as expected. Point it at a failure, and it reads through the data, identifies root causes, and surfaces patterns. Then it tells your team what to look at, whether that's a procedure that needs updating, a prompt that needs tuning, or a gap in your test coverage. It even proposes new tests to close that gap, so the same issue doesn't slip through again.

- Assistant-generated evaluations: Analyzes your automations and writes evaluation tests matched to your actual use cases, ready to run immediately.

- Trace analysis: Bring it a conversation that didn't go right, and it identifies what went wrong and why, looking for recurring patterns rather than treating each failure as a one-off incident.

- New test suggestions: After diagnosing a failure, it proposes new tests to close the coverage gap before your agent goes back into production.

- Run and analyze: Kicks off test runs, analyzes the results, and feeds the findings back into your build-test-deploy loop.
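To make the run-and-analyze step more concrete, here is a minimal sketch of what an evaluation run does in principle: each test case pairs a customer message with the action the agent is expected to take, the harness runs every case, and the summary names the failures worth investigating. This is an illustration only, not Kustomer's implementation or API. `EvalCase`, `run_evals`, `summarize`, and `toy_agent` are hypothetical names, and the toy agent is a simple keyword router standing in for a real AI agent.

```python
from dataclasses import dataclass
from typing import Callable

# Hypothetical types for illustration only; not a Kustomer API.
@dataclass
class EvalCase:
    name: str
    customer_message: str
    expected_action: str  # the action the agent should choose for this message

@dataclass
class EvalResult:
    case: EvalCase
    actual_action: str

    @property
    def passed(self) -> bool:
        return self.actual_action == self.case.expected_action

def run_evals(agent: Callable[[str], str], cases: list[EvalCase]) -> list[EvalResult]:
    """Run every test case through the agent and record the action it chose."""
    return [EvalResult(case, agent(case.customer_message)) for case in cases]

def summarize(results: list[EvalResult]) -> dict:
    """Report pass counts and name the failing cases so they can be investigated."""
    failed = [r.case.name for r in results if not r.passed]
    return {"total": len(results), "passed": len(results) - len(failed), "failed": failed}

def toy_agent(message: str) -> str:
    """Hypothetical stand-in agent: a keyword router instead of a real AI agent."""
    text = message.lower()
    if "refund" in text:
        return "start_refund_procedure"
    if "where is my order" in text:
        return "lookup_order_status"
    return "escalate_to_human"

cases = [
    EvalCase("refund request", "I'd like a refund for my last order", "start_refund_procedure"),
    EvalCase("order status", "Where is my order?", "lookup_order_status"),
    EvalCase("address change", "Please update my shipping address", "update_shipping_address"),
]

summary = summarize(run_evals(toy_agent, cases))
print(summary)
```

Running this prints `{'total': 3, 'passed': 2, 'failed': ['address change']}`: the address-change case fails because the toy agent has no procedure for it, which is the kind of coverage gap a failing evaluation is meant to surface before customers hit it.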

While It's Live: A Quality Coach Inside Every Conversation

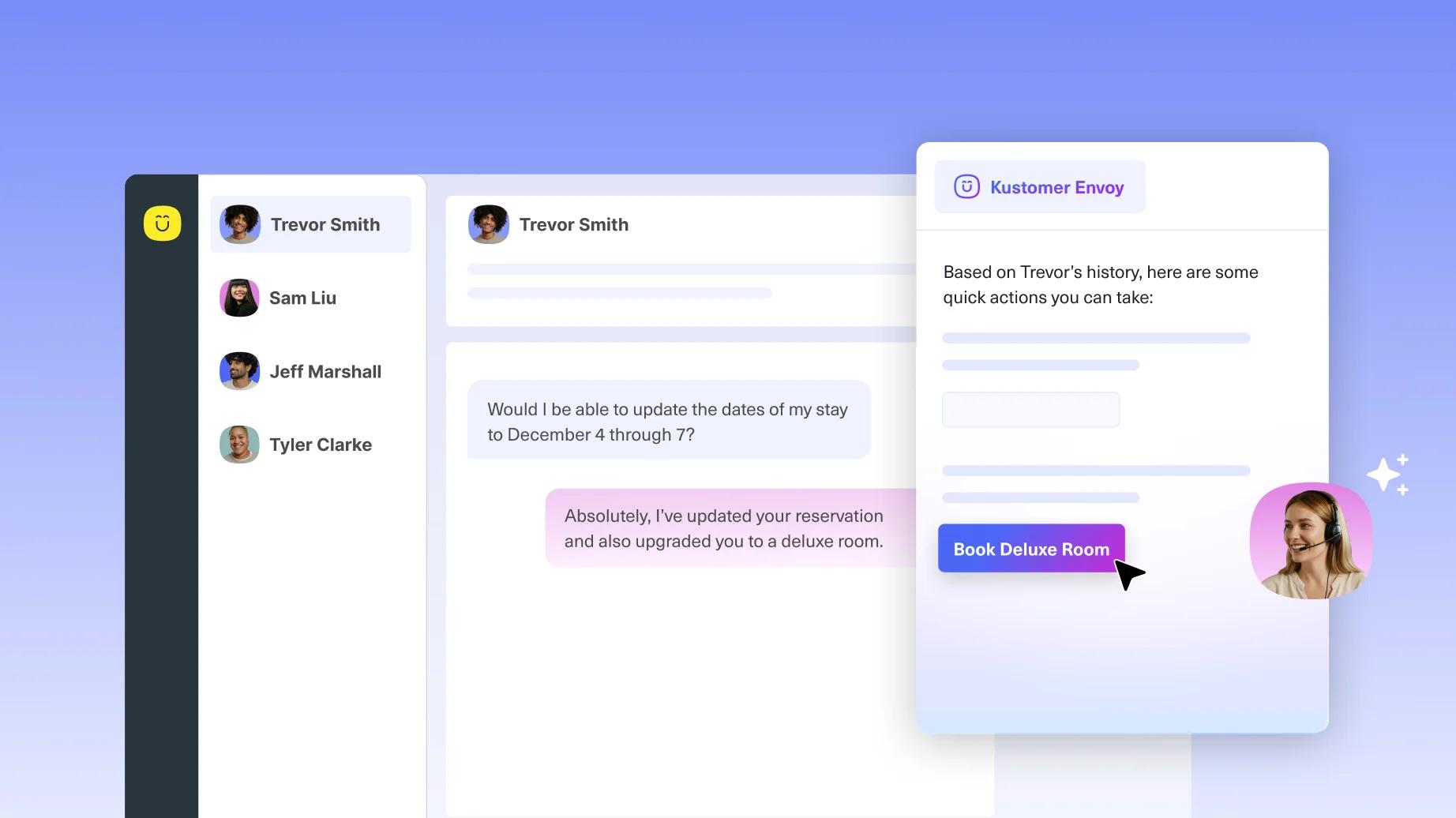

Once your agent is live, a second capability takes over. Built directly into the Kustomer agent engine, our live validation works step by step through every conversation, evaluating each action your agent takes and explaining why it made that decision. Think of it as a quality coach with a headset, watching every play in real time.

At each step, the validation reviews what the agent just did, checks whether it was the right move, and passes guidance forward to inform the next response. It's a reinforcement loop designed to keep the agent on track. And if at any point the agent reaches a state where it can't help the customer, the validation catches it and routes the conversation down a defined fallback path, so nothing falls through the cracks.

- Step-level observability: See exactly what your agent did at each point in a conversation, and the reasoning behind it, not just the final outcome.

- Live reinforcement: Validation findings are fed forward to the agent in real time, keeping it aligned with your procedures throughout the conversation.

- Failure detection: If the agent reaches a dead end, the validation flags it immediately and triggers your defined escalation or fallback workflow.

- Trace storage: Every conversation trace is stored, giving you the data you need for future analysis and continuous improvement.
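The step-by-step loop described above can be sketched in a few lines: the agent proposes a step, a validator reviews it, the validator's guidance is fed into the agent's next step, every step is recorded in a trace, and a dead end triggers a fallback. This is a simplified illustration of the pattern under stated assumptions, not the Kustomer agent engine. `Step`, `Verdict`, `Trace`, `run_conversation`, `toy_agent`, and `toy_validator` are hypothetical names, and the rule-based validator stands in for a much richer evaluation.

```python
from dataclasses import dataclass, field
from typing import Callable, Optional

# Hypothetical types for illustration only; not a Kustomer API.
@dataclass
class Step:
    action: str
    reasoning: str  # why the agent chose this action (step-level observability)

@dataclass
class Verdict:
    ok: bool
    guidance: str = ""      # fed forward to inform the agent's next step
    dead_end: bool = False  # True when the agent can no longer help the customer

@dataclass
class Trace:
    steps: list = field(default_factory=list)  # (Step, Verdict) pairs, kept for later analysis
    fallback_triggered: bool = False

def run_conversation(
    propose_step: Callable[[list, Optional[str]], Step],
    validate: Callable[[Step], Verdict],
    customer_turns: list,
    fallback: Callable[[Trace], None],
) -> Trace:
    trace = Trace()
    guidance: Optional[str] = None
    history: list = []
    for turn in customer_turns:
        history.append(turn)
        step = propose_step(history, guidance)  # agent acts with the latest guidance
        verdict = validate(step)                # validator reviews that single step
        trace.steps.append((step, verdict))
        if verdict.dead_end:
            trace.fallback_triggered = True
            fallback(trace)                     # hand off to the defined fallback path
            break
        guidance = verdict.guidance or None
    return trace

def toy_agent(history: list, guidance: Optional[str]) -> Step:
    """Hypothetical stand-in agent that records any guidance it received."""
    note = f" (guidance: {guidance})" if guidance else ""
    if "refund" in history[-1].lower():
        return Step("start_refund_procedure", "Customer asked for a refund" + note)
    return Step("no_applicable_procedure", "No procedure covers this request" + note)

def toy_validator(step: Step) -> Verdict:
    """Hypothetical rule-based validator standing in for live validation."""
    if step.action == "no_applicable_procedure":
        return Verdict(ok=False, dead_end=True)
    return Verdict(ok=True, guidance="Confirm the order number before issuing the refund")

escalations = []
trace = run_conversation(
    toy_agent,
    toy_validator,
    ["I want a refund", "Can you also change my billing address?"],
    fallback=lambda t: escalations.append(len(t.steps)),
)
```

In this run, the first step passes validation and its guidance reaches the agent's second step; the second step hits a dead end, so the fallback fires with both steps already in the trace. Keeping the full trace, rather than only the final outcome, is what makes the later root-cause analysis possible.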

The Complete Loop: Prevention, Detection, Correction

These two capabilities are designed to work together. Before launch, evaluations help you catch problems before your customers do. During live conversations, the validation ensures your agent stays on track and gives you step-level visibility into everything it does. And when something does go sideways, the traces it generates become the input for your next round of evaluations, closing the loop.

Most AI failures aren't mysterious. They happen one step at a time, and with the right visibility, they're preventable. That's why we built step-level reasoning into the core of how Kustomer agents work. Your team can see not just what happened in a conversation, but why your agent made each decision along the way. That kind of clarity doesn't just help you fix problems faster; it helps you build agents your team can actually trust.

Availability

Both evaluations for the AI Agent Assistant and live validation are available now inside Kustomer AI. If you're already a customer, you can start using them today. Reach out to your Customer Success Manager if you'd like a walkthrough or have questions about getting set up.

Not yet on Kustomer? Schedule a demo to see these capabilities in action.