The CX AI Maturity Model: 4 Stages to AI-Driven Customer Service

Most CX leaders can describe what AI-driven customer service is supposed to look like: faster resolutions, fewer repetitive interactions landing on agents, a support operation that scales without headcount growing at the same rate.

What's harder to articulate is where an organization actually is in its journey to becoming AI-driven. It might have implemented certain AI capabilities and successfully automated a number of tasks that its reps used to perform manually. But the experience still feels reactive and limited.

Many companies face this challenge: they implement AI but never become AI-driven. In fact, more than 80% of AI projects fail to reach meaningful production deployment – roughly twice the failure rate of non-AI technology projects. The root causes are consistent: poor data quality, use cases that aren't tied to business outcomes, and infrastructure that can't support the next stage.

In this article, we'll examine these issues and introduce a four-stage CX AI Maturity Model designed to address them.

What "AI-Driven CX" Actually Means

Before walking through the stages, it's worth establishing what the destination looks like, because "AI-driven" gets used loosely.

Being AI-driven in CX isn't measured by the number of tools deployed, or by individual metrics like deflection rate. It's measured by whether AI is integrated deeply enough into your operation to drive outcomes that matter to the business: retention, revenue generated through service, cost per interaction, and resolution quality at scale. These consistent, scalable business outcomes are what the four stages of AI maturity are building toward.

Remember: Implementing AI in customer service and becoming AI-driven are different things. Organizations that skip stages, jumping straight to autonomous AI without unified data or a well-designed agent layer, tend to end up with impressive demos and flat results. The maturity model prevents that by being honest about what each stage actually requires.

The 4 Stages of the CX AI Maturity Model

Here is what each stage looks like in practice, what capabilities define it, and the signals that tell you where your org currently sits.

Stage 1: Foundational (RAG-Enabled Information Retrieval)

What it looks like: A chatbot pulls answers from a knowledge base. Agents get basic in-conversation prompts. The AI retrieves. It does not reason, personalize, or act.

In this stage, basic AI deflects simple, repetitive contacts – which can be very useful for CX organizations looking to become more efficient. But the ceiling arrives quickly. Foundational AI only knows what it was pointed at. It has no view of customer history, loyalty status, or prior contacts. Anything outside its knowledge base is handed to a human, usually without context.

Core capabilities: RAG-powered chatbots, knowledge base lookups, basic agent assist prompts.

KPIs that matter here: Deflection rate, agent assist usage rate.

Signs you're here:

- Your AI handles FAQs but fails anything requiring judgment.

- Routing is keyword-based.

- Agents still manually compile context before or after every escalation.

- Deflection rate is the only AI metric being tracked.
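The Stage 1 behavior described above can be sketched in a few lines. This is a minimal illustration, not a real RAG pipeline: simple word overlap stands in for retrieval, and the knowledge base entries, `STOPWORDS`, and `answer_or_escalate` are hypothetical names invented for the example.

```python
import re

# Hypothetical knowledge base: article title -> answer text.
KNOWLEDGE_BASE = {
    "How do I reset my password": "Use the 'Forgot password' link on the sign-in page.",
    "What is your return policy": "See the returns page for eligibility and timelines.",
}

# Common words ignored when matching, so they do not cause false matches.
STOPWORDS = {"how", "do", "i", "my", "can", "what", "is", "your", "and", "was"}

def tokenize(text: str) -> set:
    """Lowercase the text, split it into words, and drop stopwords."""
    return set(re.findall(r"[a-z0-9']+", text.lower())) - STOPWORDS

def answer_or_escalate(question: str, min_overlap: int = 1) -> dict:
    """Return the best-matching article, or escalate when nothing matches."""
    question_words = tokenize(question)
    best_title, best_score = None, 0
    for title in KNOWLEDGE_BASE:
        score = len(question_words & tokenize(title))
        if score > best_score:
            best_title, best_score = title, score
    if best_title is None or best_score < min_overlap:
        # Stage 1 limitation: the handoff carries no customer context.
        return {"handled_by": "human", "answer": None}
    return {"handled_by": "ai", "answer": KNOWLEDGE_BASE[best_title]}
```

A password question is answered from the knowledge base, while a message about a damaged order that was charged twice falls through to a human with nothing attached. That is the ceiling this stage runs into.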

Stage 2: Automated and Personalized (Agentic Workflows Begin)

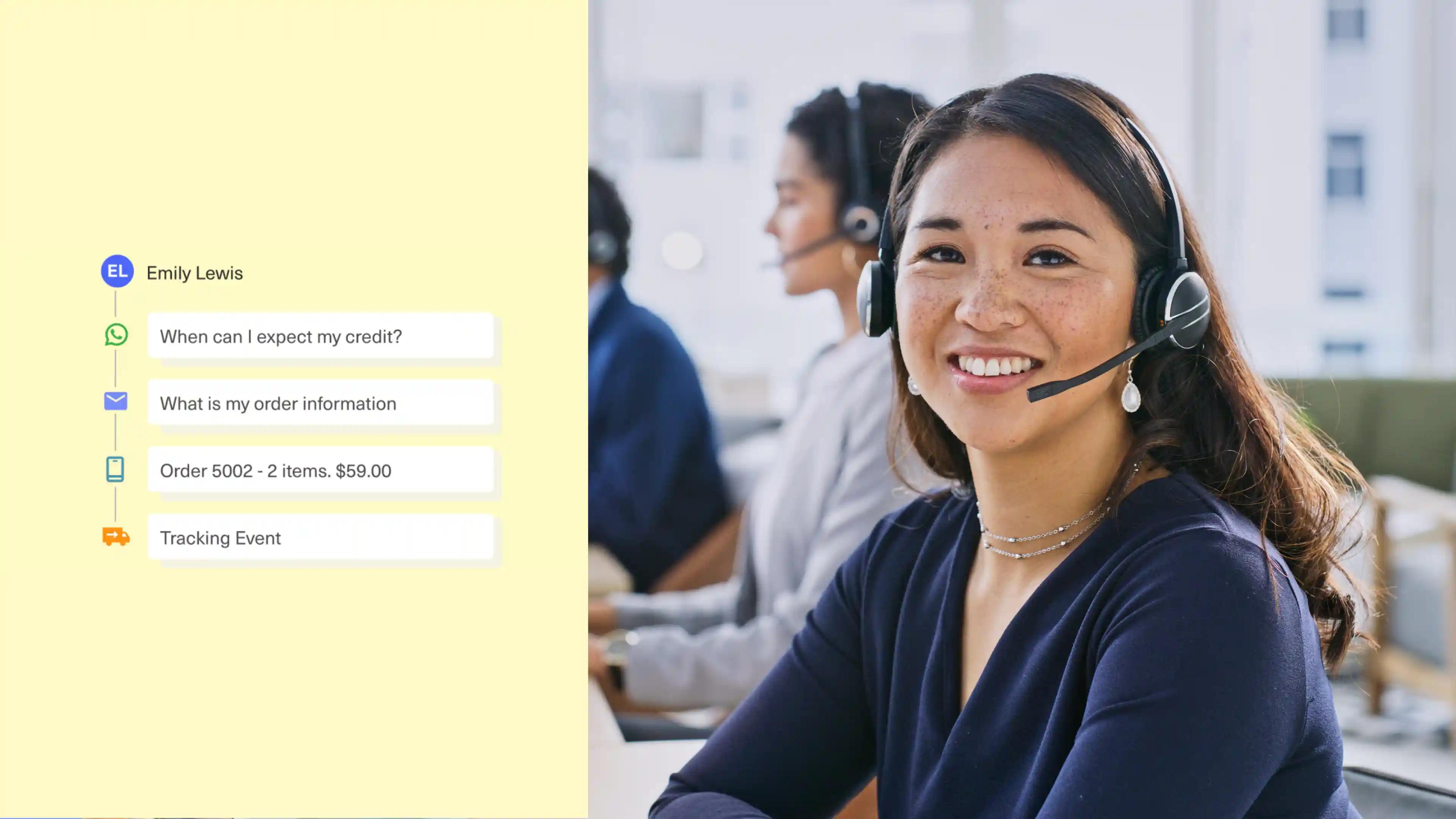

What it looks like: AI starts working with real customer context. It fetches live data, like order status, account history, and loyalty tier, and uses it to personalize responses and route intelligently. Simple agentic workflows emerge.

This is where most CX leaders feel the first meaningful return. Handle times drop because agents arrive informed rather than spending the first minutes hunting for context. Routing improves because the AI is reading intent and history, not matching on keywords. At Stage 1, AI answers questions. At Stage 2, AI equips people.

Core capabilities: Expanded RAG with live data retrieval, rule-based personalization, simple agentic workflows, real-time agent assist.

KPIs that matter here: Average Handle Time (AHT), First Contact Resolution (FCR), departmental ROI.

Signs you're here:

- Agents open conversations already informed.

- AI and human channels share the same customer data.

- You've moved beyond deflection as your only AI metric.

- Routing accounts for customer history and intent, not just topic keywords.
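To make the difference from keyword routing concrete, here is a rough sketch of routing on intent plus customer history. The `CustomerContext` fields, queue names, and thresholds are assumptions chosen for illustration, not a prescribed schema.

```python
from dataclasses import dataclass
from typing import Optional

@dataclass
class CustomerContext:
    """Hypothetical unified profile shared by AI and human channels."""
    name: str
    loyalty_tier: str                       # e.g. "standard" or "gold"
    contacts_last_30_days: int
    open_order_status: Optional[str] = None

def route(intent: str, customer: CustomerContext) -> str:
    """Pick a queue from intent and history rather than topic keywords."""
    if customer.contacts_last_30_days >= 3:
        # Repeat contacts suggest an unresolved issue.
        return "retention_specialists"
    if customer.loyalty_tier == "gold":
        return "priority_support"
    if intent == "order_status" and customer.open_order_status:
        # Live order data is enough to answer directly.
        return "ai_self_service"
    return "general_support"

def agent_briefing(intent: str, customer: CustomerContext) -> str:
    """Build the one-line summary an agent sees before the conversation starts."""
    return (
        f"{customer.name} ({customer.loyalty_tier} tier), intent: {intent}, "
        f"contacts in last 30 days: {customer.contacts_last_30_days}, "
        f"open order: {customer.open_order_status or 'none'}"
    )
```

The same context object feeds both the routing decision and the agent briefing, which is what lets agents open conversations already informed.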

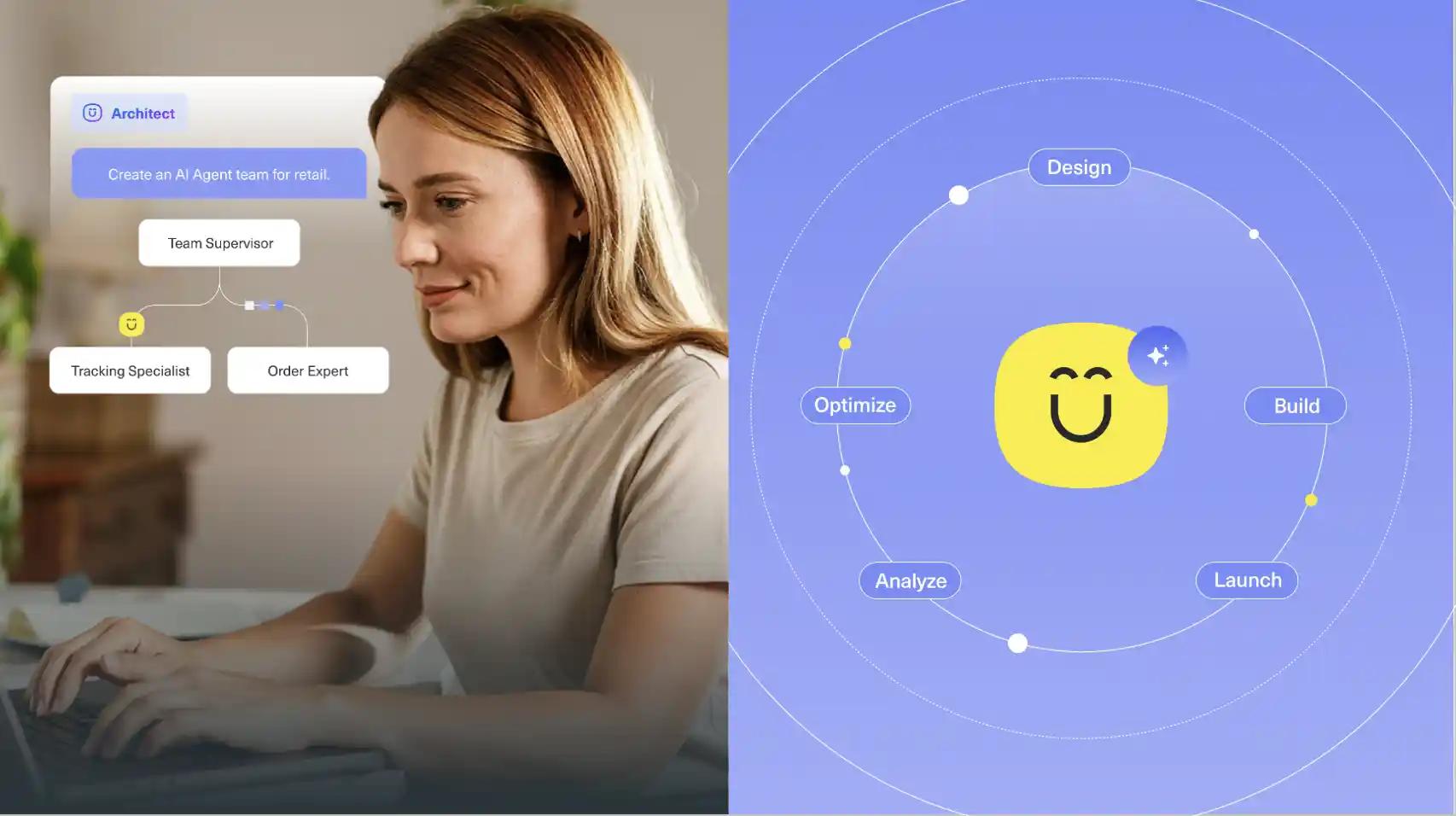

Stage 3: Integrated and Proactive (Multi-Agent, Autonomous Resolution)

What it looks like: AI agents handle complete workflows end-to-end without human handoff. They take action: processing requests, resolving issues autonomously, proactively reaching out based on signals in the data. Multi-agent systems coordinate on complex, multi-step problems.

The transition from Stage 2 is a shift in authority. Stage 3 is where AI has the true ability to act on behalf of the business, with humans supervising outcomes rather than driving individual interactions.

Getting here requires explicit decisions about what the AI is trusted to handle and what should still route to a human. Complexity, emotional context, customer tier, and policy sensitivity all factor in. Teams that build routing logic deliberately, before expanding AI scope, move faster and with far fewer quality problems than those that don't.
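One way to make those decisions explicit is a small gate that runs before any autonomous action. The sketch below assumes the four factors arrive as pre-computed fields on a hypothetical `Request` object; the scoring scale, tier names, and thresholds are placeholders that each team would set for itself.

```python
from dataclasses import dataclass
from typing import Tuple

@dataclass
class Request:
    """Hypothetical request record; all fields are assumed to be set upstream."""
    complexity: int            # 1 (simple) to 5 (multi-step, ambiguous)
    negative_sentiment: bool   # emotional context flagged by an upstream model
    customer_tier: str         # e.g. "standard" or "enterprise"
    policy_sensitive: bool     # e.g. exceptions, legal, or safety topics

def should_ai_resolve(req: Request, max_complexity: int = 3) -> Tuple[bool, str]:
    """Return whether the AI may act autonomously, with the reason for the decision."""
    if req.policy_sensitive:
        return False, "policy-sensitive request"
    if req.negative_sentiment:
        return False, "emotional context needs a human"
    if req.customer_tier == "enterprise":
        return False, "high-touch customer tier"
    if req.complexity > max_complexity:
        return False, "complexity above approved AI scope"
    return True, "within approved autonomous scope"
```

Returning the reason alongside the decision keeps the routing auditable, and expanding AI scope becomes a deliberate change to this function rather than a side effect of a new deployment.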

Core capabilities: Multi-agent systems, end-to-end autonomous resolution, predictive analytics, proactive customer service.

KPIs that matter here: Complex deflection rate, autonomous resolution rate, CSAT and NSAT impact.

Signs you're here:

- A meaningful share of volume resolves without a human touch.

- Escalations pass along full context so agents never start cold.

- You're measuring AI on outcome quality, not just whether it deflected.

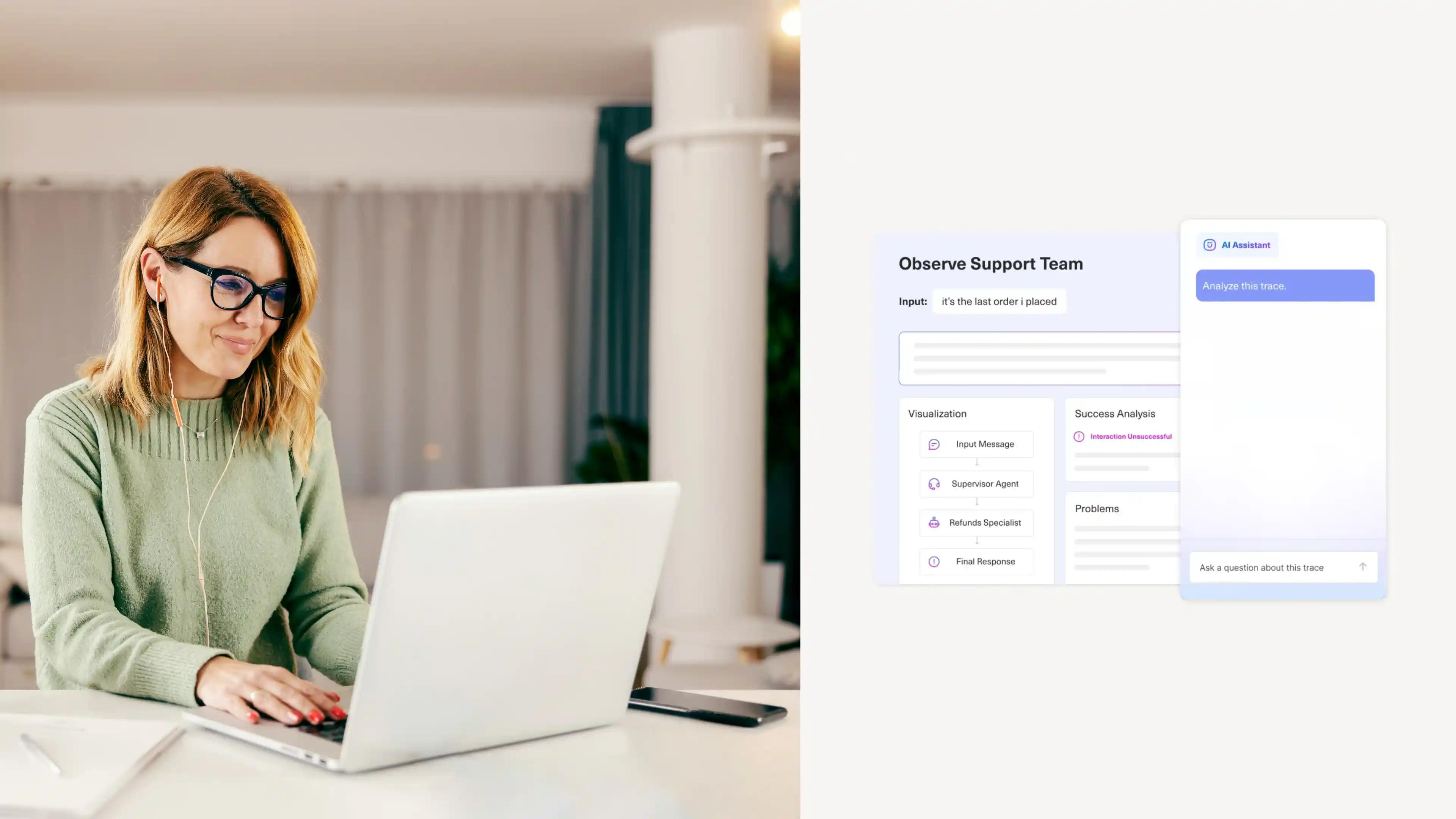

Stage 4: AI-Driven Enterprise (Optimized and Adaptive)

What it looks like: AI is not a channel or a feature. It is how the operation runs. Routing, quality assurance, knowledge management, workforce forecasting, and coaching all have AI beneath them. Self-optimizing agents improve continuously based on performance data. Human agents supervise AI and focus on the interactions that require genuine judgment and relationship.

This is also where AI-powered service can start to drive revenue. When AI has access to full customer context, it can act on retention signals, surface upsell moments, and personalize in ways that compound loyalty over time.

Core capabilities: Enterprise-wide AI orchestration, self-optimizing agents, AI-driven QA at 100% conversation coverage, ethical AI governance.

KPIs that matter here: Enterprise productivity gains, LTV impact, revenue generated through service.

Signs you're here:

- AI QA covers every conversation, not a sampled subset.

- Your knowledge base identifies its own gaps automatically.

- CX data answers plain-language questions in real time, without a report export.

- Service is measured as a revenue function, not just a cost center.

Best Practices for Advancing Through the AI Maturity Model

No matter how far along you are in your AI journey, advancing to the next stage takes specific actions. You can't simply decide to become an AI-driven organization and buy a stack of tools to get there; you must first address the foundation you're starting with and the specific future you want to build.

1. Fix the data layer before adding capabilities (Stage 1 to Stage 2)

Plenty of organizations implement AI, get disappointed in the results, and then blame the AI. In most of these cases, the problem isn't the AI but what it's working with: siloed, incomplete data.

If agents are still pulling customer context manually from separate systems, your AI is missing that context too. The foundation has to be right before anything built on top of it can perform.
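A quick way to see the gap is to merge what each system knows about a customer and list what is still missing. The sketch below uses three in-memory dictionaries as stand-ins for a CRM, an order system, and a ticketing tool; the system names, field names, and `REQUIRED_FIELDS` are assumptions for illustration only.

```python
# Hypothetical exports from three separate systems, keyed by customer ID.
crm = {"c-1": {"name": "Ana", "loyalty_tier": "gold"}}
orders = {"c-1": {"last_order_status": "shipped"}}
tickets = {"c-1": {"open_tickets": 1}, "c-2": {"open_tickets": 2}}

# Fields the AI needs before it can personalize or route on context.
REQUIRED_FIELDS = ("name", "loyalty_tier", "last_order_status", "open_tickets")

def unified_profile(customer_id: str, *sources: dict) -> dict:
    """Merge one customer's records from every source into a single view."""
    profile = {"customer_id": customer_id}
    for source in sources:
        profile.update(source.get(customer_id, {}))
    return profile

def missing_fields(profile: dict) -> list:
    """List the required fields that no source supplied for this customer."""
    return [field for field in REQUIRED_FIELDS if field not in profile]
```

Here customer `c-1` has a complete profile, while `c-2` exists only in the ticketing data. Any AI working on `c-2` is missing the same context an agent would have to look up by hand.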

2. Tie every AI initiative to a measurable business outcome (All stages)

One of the most consistent root causes of AI project failure is deploying capabilities without a specific problem to solve. Before any new rollout, name the outcomes you are targeting: handle time, resolution rate, cost per contact, or customer satisfaction. Build measurement in from the start, not after.

3. Decide what AI should handle before you deploy it (Stage 2 to Stage 3)

The question is not only "can the AI handle this?" It is "should it?" given the complexity, emotional context, customer tier, and policy sensitivity involved. Building routing logic around that distinction before expanding autonomous scope prevents quality problems that erode trust. 38% of consumers say human oversight of AI decisions would directly increase their trust. Designing for escalation isn't just good engineering. It's good CX.

4. Keep human oversight close during new deployments (Stage 2 to Stage 3)

Expanding AI scope without expanding human review is how quality problems compound quietly. Teams should be reading AI transcripts regularly during any new deployment, not just monitoring aggregated metrics. Gartner found that 55% of organizations using AI are handling higher customer volumes with stable headcount, which points to augmentation, not replacement, as the goal. Oversight is what makes that model sustainable.

5. Evolve your KPIs as you advance (All stages)

Deflection rate measures whether AI avoided a human. It does not measure whether the customer got what they needed or came back. As maturity advances, the metrics need to advance with it: efficiency metrics such as AHT and FCR at Stage 2, experience and resolution-quality metrics such as CSAT at Stage 3, and revenue-centric measures such as LTV at Stage 4.

This is also where CX analytics and AI maturity intersect directly. The metrics you track shape the decisions you make. Tracking lagging indicators keeps the bar low. Tracking outcomes raises it.
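The gap between deflection and resolution is easy to show with a small calculation. The definitions below are one reasonable reading of each KPI, not an industry standard, and the record fields and sample data are invented for the example.

```python
# Hypothetical interaction log; each dict is one customer contact.
interactions = [
    {"reached_human": False, "resolved": True,  "first_contact": True,  "handle_seconds": 0},
    {"reached_human": False, "resolved": False, "first_contact": True,  "handle_seconds": 0},
    {"reached_human": True,  "resolved": True,  "first_contact": True,  "handle_seconds": 300},
    {"reached_human": True,  "resolved": True,  "first_contact": False, "handle_seconds": 500},
]

def share(records: list, predicate) -> float:
    """Fraction of records for which the predicate is true."""
    return sum(1 for r in records if predicate(r)) / len(records)

def kpis(records: list) -> dict:
    """Compute stage-appropriate KPIs under the assumed definitions above."""
    human_handled = [r for r in records if r["reached_human"]]
    return {
        # Stage 1: contacts that never reached a human, resolved or not.
        "deflection_rate": share(records, lambda r: not r["reached_human"]),
        # Stage 2: resolved on the first contact, by AI or by an agent.
        "first_contact_resolution": share(records, lambda r: r["resolved"] and r["first_contact"]),
        # Stage 2: mean handle time across human-handled contacts only.
        "average_handle_seconds": sum(r["handle_seconds"] for r in human_handled) / len(human_handled),
        # Stage 3: resolved with no human involvement at all.
        "autonomous_resolution_rate": share(records, lambda r: r["resolved"] and not r["reached_human"]),
    }
```

On this sample, half of the contacts are deflected but only a quarter are actually resolved by the AI. A team tracking deflection alone would miss the customer who never got an answer.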

Start Where You Are, Build From There

AI maturity is not about reaching Stage 4 as fast as possible. It is about building each stage on a foundation that actually supports the next one. Stage 1 proves the concept. Stage 2 creates real efficiency. Stage 3 shifts what your team is capable of. Stage 4 compounds those gains across the entire operation.

The organizations pulling ahead are not the ones that deployed the most AI the fastest. They are the ones that built deliberately, measured honestly, and understood what they were actually optimizing for. Knowing your current stage is the clearest place to start.

Ready to see what AI-native CX looks like in practice? See how Kustomer helps CX teams progress through every stage of the maturity curve.